⚡️ Assessment Unlocked: Thinking beyond the prompt

If AI can draft essays, summarize research, and outline arguments in seconds, what exactly are we assessing when we assign traditional analytic tasks? This week we step into the deep water: how to design and evaluate higher-order thinking—analysis, synthesis, evaluation, creativity—when students have access to powerful generative tools.

🧠 Introduction

Higher-order thinking has always been the gold standard. Now it’s the survival skill. The presence of AI doesn’t eliminate higher-order cognition; it exposes where we were quietly measuring task completion instead of reasoning. The question is no longer “Can students produce?” but “Can students think with and beyond tools?”

Key takeaway: AI raises the floor of production. We must raise the ceiling of cognition.

📚 Background

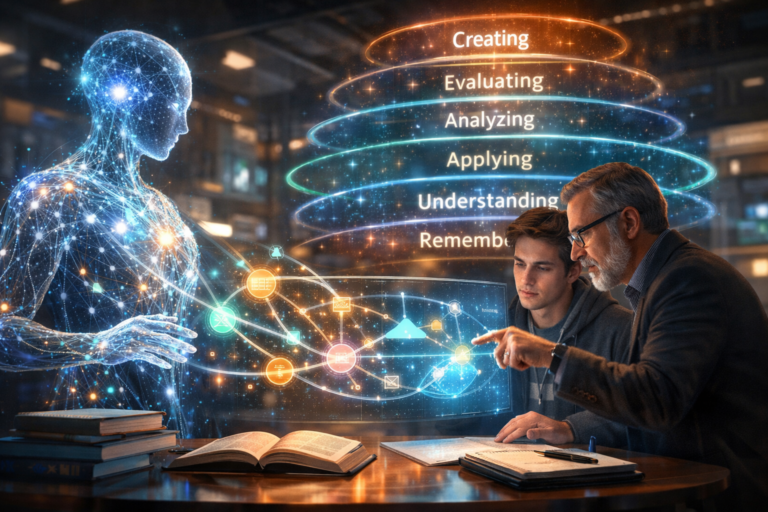

The classic framework here is the revised Bloom's Taxonomy, published as A Taxonomy for Learning, Teaching, and Assessing (Anderson & Krathwohl, 2001). It distinguishes lower-level cognitive processes (remembering, understanding) from higher-level processes (analyzing, evaluating, creating). The taxonomy was never about banning tools; it was about clarifying what kind of thinking a task requires.

John Biggs's theory of constructive alignment holds that intended learning outcomes, learning activities, and assessment tasks must all target the same level of cognition. If we claim to assess “critical evaluation” but grade mostly on surface features, alignment breaks.

Backward design, articulated by Grant Wiggins and Jay McTighe in Understanding by Design, starts from desired results and argues that assessment should target enduring understandings: transferable thinking, not temporary task performance. AI tools now perform many surface tasks well, which forces us to ask whether our assignments were actually measuring transfer and reasoning all along.

AAC&U’s VALUE rubrics reinforce this perspective, especially for outcomes like critical thinking, integrative learning, and creative thinking. These rubrics define higher-order performance in terms of evidence use, contextualization, and synthesis—not mere output volume.

The presence of AI doesn’t invalidate these frameworks. It stress-tests them. If a generative model can satisfy an assignment with work pitched at the “understand” level, that may reveal the cognitive ceiling of the task, not a shortcoming of the student.

Key takeaway: AI is not erasing higher-order thinking. It is exposing where we never truly assessed it.

🛠️ Best practices & tips

Let’s get practical.

🔬 Design for transfer, not reproduction.

Ask students to apply concepts in unfamiliar contexts. Transfer, meaning the use of knowledge in a novel situation, is notoriously difficult, for people and machines alike. AI can generate plausible responses, but sustained adaptation to a specific context reveals real reasoning.

🧾 Require metacognitive explanation.

Metacognition means thinking about one’s thinking. Have students explain why they chose a method, how they evaluated sources, or how they revised AI-generated drafts. Reflection reveals depth.

⚖️ Assess decision-making tradeoffs.

Evaluation involves weighing alternatives. Present dilemmas with competing values and require justification of choices. The quality of reasoning matters more than the final stance.

🔄 Integrate AI as a variable, not a secret.

Ask students to critique AI output, improve it, or identify its limitations. That moves them into analysis and evaluation territory.

📊 Use rubric criteria that emphasize reasoning evidence.

Descriptors should reference justification, integration, and synthesis—not word count or stylistic polish.

Quick win: Add a short “reasoning rationale” component to any major assignment this semester.

Key takeaway: Higher-order thinking shows up in choices, tradeoffs, and explanation—not in polished prose alone.

🏫 Example or case illustration

Setting: An upper-division History course assigning a historiographical essay.

In previous years, students summarized scholarly debates competently—but depth varied. With AI widely available, the instructor redesigned the assignment.

Instead of “summarize the debate,” students were asked to:

- Use AI to generate a preliminary summary.

- Critique the AI’s framing of the debate.

- Identify one overlooked perspective.

- Propose a refined interpretive model with justification.

The friction point was anxiety: Would this legitimize AI overuse? The instructor reframed it. AI became a starting hypothesis generator, not the final thinker.

Assessment shifted toward evaluating how well students identified nuance, corrected oversimplification, and constructed defensible interpretations. The strongest submissions did not merely improve wording—they reshaped the conceptual framing.

Resolution: AI became an object of analysis rather than a shortcut.

Key takeaway: When students interrogate AI output, they practice higher-order cognition.

🔮 What’s next

Next week, we’ll examine designing authentic assessments that resist automation without banning tools—a forward-looking approach grounded in transfer and context specificity.

Prep action: Identify one assignment where output quality may no longer signal thinking quality.

❓ Question of the day

If AI can complete your assignment competently, what dimension of thinking might be under-assessed?

🚀 Call to action

Choose one course learning outcome this week and rewrite it explicitly at the analysis, evaluation, or creation level. Then adjust one rubric criterion to match.