When Surveys Actually Work: Designing Instruments That Tell the Truth

From purpose to pilot—how to build surveys that produce reliable, usable, decision‑ready insights

🌅 Introduction

Surveys can be magical—when they’re done well. They can illuminate student experiences, uncover instructional gaps, and give leaders the kind of clarity that spreadsheets alone just can’t offer. But when they’re done poorly? Well… let’s just say a dart-throwing octopus could produce cleaner data. Today’s post walks through the craft of survey design, from defining purpose to validating instruments and turning responses into meaningful insights. Whether you’re designing a quick pulse check or a full accreditation study, these principles will upgrade your approach.

⭐ Best Practices & Tips: Building Surveys That Produce Truth, Not Guesswork

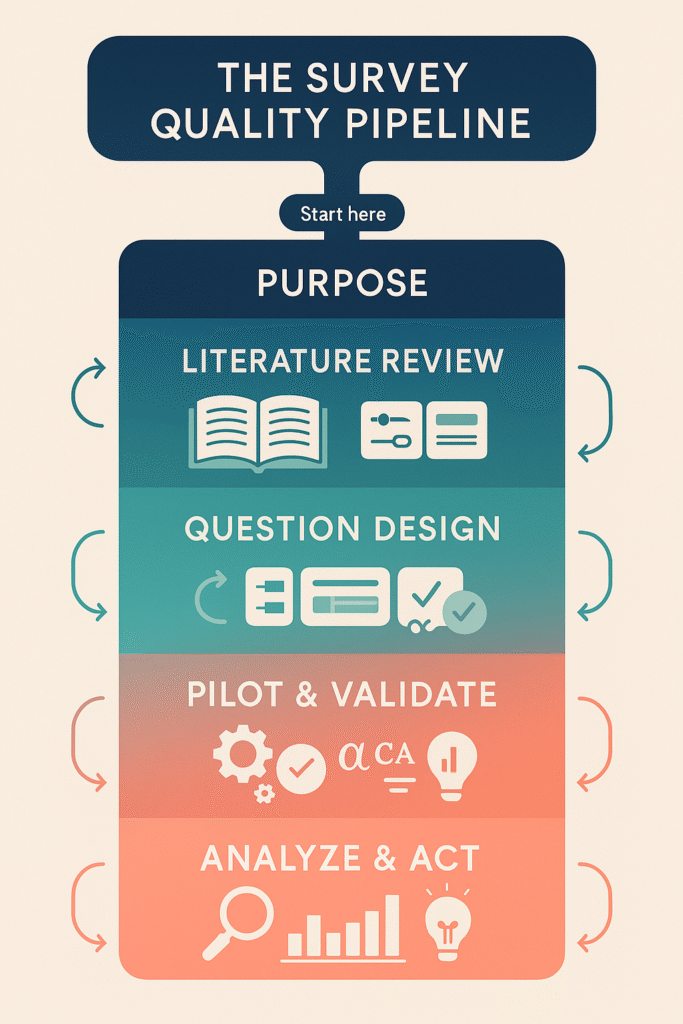

1️⃣ Begin With a Purpose, Not Questions

A strong survey starts with a clear decision point.

- Identify the exact decision the survey will inform.

- Write the purpose as a single sentence.

- Keep only what reinforces the purpose; everything else is noise.

2️⃣ Don’t Skip the Literature Review

Even small surveys benefit from grounding in research.

- Search for validated constructs (e.g., belongingness, engagement, feedback clarity).

- Borrow or adapt existing scales when possible; they come with prior validity evidence, though adapted items should be re‑checked with your own population.

- Note which items have been validated in higher‑ed contexts.

3️⃣ Design Questions That Behave Themselves

Good items have three qualities: clarity, specificity, and singular focus.

- Avoid double-barreled prompts that ask about two things at once (“The instructor was clear and supportive”).

- Keep language accessible at an 8th–10th grade reading level.

- Use balanced Likert scales with at least five points and symmetric anchors.
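
A quick way to screen a draft for double-barreled items is a small script. The sketch below is only a rough heuristic, not a validated method: the function name `flag_double_barreled` is made up for illustration, and it simply flags any item containing "and" or "or" as a whole word. A flagged item still needs a human to decide whether it really asks about two things.

```python
import re

# Heuristic assumption: a whole-word "and"/"or" often signals that an item
# bundles two ideas. This will produce false positives and false negatives.
CONJUNCTION = re.compile(r"\b(and|or)\b", re.IGNORECASE)


def flag_double_barreled(items):
    """Return the items that contain 'and' or 'or' as a whole word."""
    return [item for item in items if CONJUNCTION.search(item)]


draft_items = [
    "The instructor was clear and supportive.",
    "I understood what I needed to do to succeed in this course.",
]

# Print each item that deserves a second look from a human reviewer.
for item in flag_double_barreled(draft_items):
    print("Review:", item)
```

Treat the output as a to-do list for review rather than a verdict; some items legitimately use "and" for a single idea.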

4️⃣ Pilot Like Your Data Depends on It (Because It Does)

Piloting identifies failures before they become findings.

- Test item wording, timing, branching logic, and cognitive load.

- Look for floor/ceiling effects and ambiguous items.

- Ask pilot testers to “think aloud” to reveal misinterpretations.
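
Floor and ceiling effects are easy to check once pilot responses are in. Here is a minimal sketch, assuming a 1–5 scale and complete responses for a single item. The function names and the 50% cutoff are illustrative assumptions, not a standard; pick a threshold that fits your instrument.

```python
def floor_ceiling_share(responses, scale_min=1, scale_max=5):
    """Return (floor_share, ceiling_share) for one item's responses.

    floor_share is the fraction of responses at the lowest scale point;
    ceiling_share is the fraction at the highest.
    """
    if not responses:
        raise ValueError("responses must not be empty")
    n = len(responses)
    floor = sum(1 for r in responses if r == scale_min) / n
    ceiling = sum(1 for r in responses if r == scale_max) / n
    return floor, ceiling


def has_floor_or_ceiling_effect(responses, scale_min=1, scale_max=5,
                                threshold=0.5):
    """Flag an item when too many answers pile up at either end.

    The 0.5 default is an illustrative assumption; tune it to your context.
    """
    floor, ceiling = floor_ceiling_share(responses, scale_min, scale_max)
    return floor > threshold or ceiling > threshold


# Hypothetical pilot data for one item on a 1-5 agreement scale.
pilot_item = [5, 5, 5, 5, 4, 5, 5, 3, 5, 5]
print(floor_ceiling_share(pilot_item))
print(has_floor_or_ceiling_effect(pilot_item))
```

An item that nearly everyone rates at the top cannot distinguish between courses, so it is a candidate for rewording or sharper anchors.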

5️⃣ Validate & Analyze Systematically

Even internal surveys deserve rigor.

- Check reliability (Cronbach’s alpha, McDonald’s omega).

- Examine factor structure if measuring multiple constructs.

- Use LLMs to summarize qualitative comments, cluster themes, or spot contradictions.

- Present results with limitations to maintain integrity.
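
For readers who want to see what a reliability check involves, here is a minimal sketch of Cronbach’s alpha using only Python’s standard library. It assumes every respondent answered every item in the scale (no missing data) and that all items are scored in the same direction; the function name and sample data are hypothetical. A statistics package will handle missing values and confidence intervals more carefully.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Compute Cronbach's alpha for one scale.

    rows: list of respondents, each a list of numeric item scores.
    Formula: alpha = k/(k-1) * (1 - sum(item variances) / variance(totals)),
    where k is the number of items.
    """
    if len(rows) < 2:
        raise ValueError("need at least two respondents")
    k = len(rows[0])
    if k < 2:
        raise ValueError("need at least two items")
    if any(len(row) != k for row in rows):
        raise ValueError("every respondent must answer every item")

    # Sample variance of each item (column) across respondents.
    item_variances = [variance(column) for column in zip(*rows)]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(row) for row in rows])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")

    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical pilot data: five respondents, three items on a 1-5 scale.
pilot_rows = [
    [4, 5, 3],
    [2, 3, 4],
    [5, 4, 5],
    [3, 2, 2],
    [4, 4, 3],
]
print(round(cronbach_alpha(pilot_rows), 2))
```

Alpha rises when items move together across respondents, which is why rewording one ambiguous item can noticeably change a scale’s reliability.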

🧩 Case Illustration: The “Great Course Feedback Makeover”

A community college struggled with student course evaluations that read like a collection of vague impressions. Faculty felt they offered little value, and administrators worried the data couldn’t support improvement decisions.

A redesign team—faculty, institutional researchers, and assessment specialists—began by reframing the purpose: “Provide actionable feedback on course design, instructor practices, and learning support.”

Phase 1: Literature Review

They identified three validated constructs commonly used in higher ed:

- Instructional clarity

- Course organization

- Learning support and accessibility

The team adapted items from existing instruments rather than starting from scratch.

Phase 2: Drafting & Cognitive Interviews

Students were invited to think aloud as they read sample questions. They caught issues faculty had missed: ambiguous time references, jargon, and unclear anchors.

For example:

- Original: “The instructor communicated expectations clearly.”

- Revised: “I understood what I needed to do to succeed in this course.”

Students consistently preferred the revised version because it was concrete and student‑centered.

Phase 3: Piloting & Validation

The pilot survey went out to 600 students across eight departments.

- Reliability for the clarity scale improved from α = .62 to α = .84.

- Exploratory factor analysis supported a three‑factor structure that aligned with the design.

- LLM-assisted analysis on open‑ended comments revealed new themes around “pace,” “example quality,” and “feedback timing”—all actionable.

Phase 4: Implementation & Insights

Once deployed institution‑wide, faculty found the data more precise and genuinely helpful. Students noted the survey was easier to understand and quicker to complete. Most importantly, the new evaluation supported course redesigns that directly improved student engagement the following year.

📊 The Survey Quality Pipeline

Purpose → Literature review → Question design → Pilot → Validation & analysis

🌻 Closing

Great surveys aren’t just forms; they’re measurement instruments. When crafted with intention, grounded in evidence, and refined through piloting and validation, they become some of the most powerful tools campus leaders have for understanding experiences, improving instruction, and guiding strategic decisions. Whether you’re conducting program evaluation, assessing teaching effectiveness, or gathering student voice, survey design is one of the highest‑leverage skills in higher education.

Next week we’ll pivot to Evaluation & Mentoring, exploring logic models, real-world evaluation frameworks, and how to build cultures where continuous improvement genuinely sticks.

💬 Question of the Week

If you redesigned one survey on your campus tomorrow, which decision would you want its results to inform—and what would you change first?